Data is all around us: the things we buy, the articles we read, and the content we enjoy. The “digital footprints” that we’re all leaving are immense: we’re nearing a point when This insurmountable amount of information, therefore, has paved the way for some awesome technological innovations: natural language processing, for instance, thrives on the rich textual data available on the internet.

This raises an interesting question: “How do we acquire this data?” The answer lies in web scraping which typically implies automated process of gathering data from the internet. Web scraping, however, isn’t always a “white hat” (i.e. abiding by the law and terms of use) practice — it can be utilized aggressively, causing potential damage to the donor resource.

The focus of this article, therefore, is ethical web scraping — acquiring the data you need without becoming Dr. Evil. In this article, we’ll explore the definition of web scraping, how it works, its use cases, legal and ethical issues — and how to avoid them by scraping responsibly.

Getting the definition right

Before we begin, let’s define this term. Web scraping (also known as web harvesting or web data extraction) is the process of extracting data from web-based resources. This brief definition holds a few key points which can help us understand it even better:

- Web-based resources refer to collections/networks of websites.

- Data can refer to texts, images, videos, and so on.

OK. So how does it work?

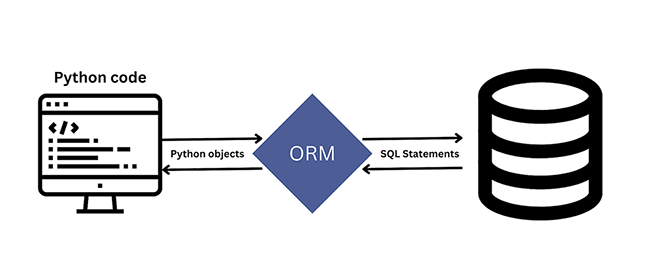

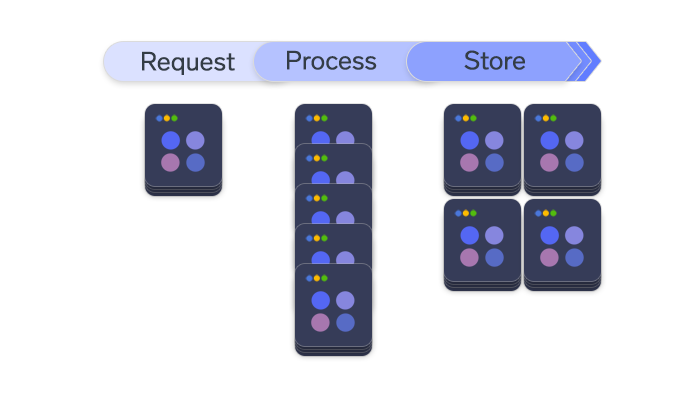

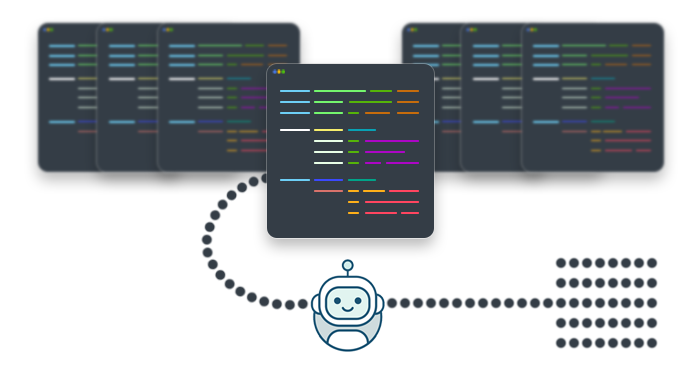

The power of everything digital lies in the way data is organized — in a strict system, that is. The web is no exception: the “Markup” in “Hypertext Markup Language” refers to the way raw data is marked up and ready for access. In its basic form, web scraping is organized in the following manner:

- Make a request to the URL which contains the necessary HTML data.

- Using a scraping tool (e.g. Scrapy), parse the HTML → find the element with particular data you’re looking for (e.g. the picture’s alt text) → extract the data.

Naturally, proficiency in web scraping comes with great benefits: for one, the entire internet pretty much becomes your database It’s tempting to think that scraping is equivalent to using APIs — after all, they produce similar functionality. However, it’s not so simple…

The ethical and legal issues

Site owners are perfectly justified when they try to protect themselves from scraping — otherwise, their websites are running these risks:

- Content can be stolen, granting the thief a competitive advantage.

- Website can crash (as scraping bots, when sending requests en masse, can accidentally create a DDoS-like attack on the web server)

- Website’s algorithms and inner workings (especially if it’s a social media platform) can be exploited and manipulated.

- and much, much more.

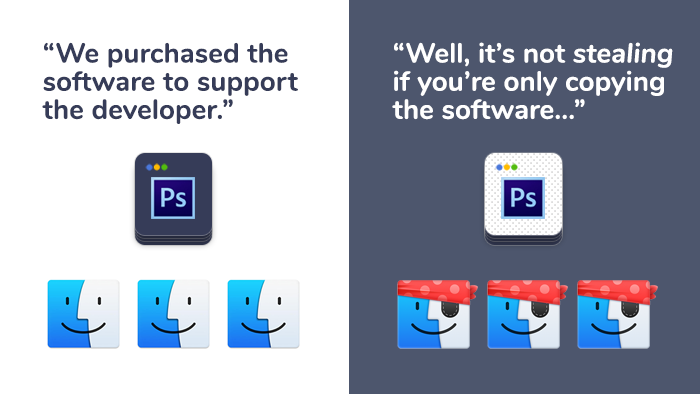

Naturally, “evil” scrapers disregard the websites’ rules and terms of service completely — but they often fail to realize that their strategy won’t be profitable in the long run. As web scraping involves copying of files, it introduces a curious ethical (and almost philosophical) challenge:

- In Scenario A, the thief steals an apple (or an Apple computer, for that matter). This particular apple is unique and singular — stealing it from its owner means it went from one person to another.

- In Scenario B, the thief steals a copy of Adobe Photoshop. The software is unique as well — but it is simultaneously abstract, so it’s impossible to force the owner to give up their possession of the software.

Some people, therefore, argue: “It is impossible to truly steal software: stealing involves the owner ultimately losing the possession, but you’re merely making a copy of it.”

Site owners, on the other hand, aren’t too keen on debating the ethics of web scraping, so many of them view all kinds of scraping as harmful. In theory, we could turn to some legal systems or web standards to define the boundaries — in practice, however, our laws are still adapting, so we’ve yet to get the definitive answer.

In the United States, for instance, legal claims like copyright infringement can, in theory, protect the resource from being scraped: over the last two decades, many companies (including eBay, American Airlines, Associated Press, Facebook, LinkedIn, and more) have tried to safeguard their online property — but different judges ruled different decisions, rendering web scraping’s legal status uncertain.

These legal cases showcase that web scraping is still very much in the grey zone. This lack of regulations is hurting both the webmasters and white-hat scrapers. When it comes to massive scraping projects, therefore, consulting lawyers is absolutely mandatory; for smaller projects, the resource’s terms of use can provide the guidelines detailing how to collect data without pissing everyone off.

Methods of web scraping prevention

For webmasters dissatisfied with how their resources are constantly being crawled, there are quite a few tricks and systems to safeguard their websites against scraping. Here are some of them:

- Honeypots are computer security mechanism disguised as a real part of the website which is isolated and monitored. It lures bots, allowing webmasters to track the source of attack and block it.

- By extension, it’s possible to block IP addresses of bots based on specific criteria (e.g. geolocation).

- Some companies resort to disabling the APIs: as detailed in our article about bots in social media and messengers, Instagram chose exactly this option.

- CAPTCHA services have been battling bots for a long time; Google’s recent reCAPTCHA v3 (I’m not a robot), however, seems to finally be able to put an end to botting once and for all.

- Application firewalls and other commercial anti-bot services.

- The website can also be obfuscated via small variations to the HTML/CSS code. This confuses bots as they’re designed to work with clear HTML structure.

- robots.txt can be used to explicitly indicate that crawling is not allowed (or allowed only partially, or the crawl rate is limited).

Guidelines for ethical and responsible web scraping

These guidelines come directly from the “Don’t be evil” approach which you can easily formulate by yourself; the main idea is this: in the long run, collaboration and respect between the scraper and the site owner trump greed and egoism.

- Prefer APIs over explicit scraping (when possible, of course; as discussed above, providing a working API is sometimes not an option for large companies).

- Each website is unique — articles like ours do provide you with a general understanding, but different webmasters impose different requirements. Speaking of requirements…

- The website’s Terms of Service is the source of everything you’d need to know when working with the given resource. The more instances of different ToS you’ll read, the better you’ll understand what generally constitutes as responsible scraping. Website’s robots.txt can also provide similar information.

- Set an adequate craw rate (i.e. the number of requests you send in X seconds). Adequate isn’t a fixed number; webmasters usually specify it in the robots.txt. If robots.txt doesn’t provide this information, it’s reasonable to set the crawl rate to 1 request per 10-15 seconds.

- When in doubt, ask. The scraping approach you opt for might look conservative to you, but the webmasters can disagree. Therefore, you can always contact the website’s team to ask for clarifications or even try and negotiate.

- Identify your scraper bot via a legitimate user agent string. This indicates that your intentions are, in fact, not malicious. In the user agent string, you can also link to a page explaining why you need the data.

Web scraping use cases

The issues, problems, and caveats we’ve outlined above do seem pretty challenging — but the power behind web scraping is absolutely worth it. If you ever yourself wondering, what is web scraping used for?, these use cases can give you a great answer:

Aggregators collect categorized data from multiple sources and present it to their users. Platforms like TripAdvisor, booking.com, and Flightradar24 all utilize publicly available data and package it for the end-user’s convenience.

Lead generators can be used to harness information about business and individuals from websites like LinkedIn; this information typically includes names, email addresses, phone numbers, etc.

Scientific research thrives on rich data sets: the more information available, the better and more precise the research’s outcome will be. By extension, commercial research (the study of competitors and their products) also needs a lot of domain-specific information.

The latest advancements in Machine learning have become possible, in part, thanks to the increasing amount of information available on the web — it can train machine learning models

Web scraping tools and technologies

The tool can absolutely make or break the web scraping process, so it’s crucial to ensure that you’re using the right tool for the job. In terms of programming languages, Python and JavaScript are the most popular choices: even though they’re vastly different, their strengths and advantages ensure that you can use either of them and fine-tune to your needs.

Honorable mentions: As regular scrapers can only interact with HTML, you might need to utilize software like Selenium to process JavaScript; Selenium, in essence, works like a “fake browser” and can operate where simpler parsers fail. Also, Puppeteer can be useful because it can automate regular user actions in the browser. Powered by node.js, it excels at dynamically loading pages — for instance, those utilizing Ajax — and Single Page Applications (web apps built with Angular, React, or Vue).

Web scraping libraries in JavaScript

Naturally, the popularity of JavaScript (and, by extension, Node.js) allowed for a number of awesome web scraping libraries. Let’s take a closer look at them:

Request is an HTTP client known for its simplicity and efficiency. It allows the developer to make HTTP calls quickly and easily, with an additional support for HTTPS redirects. Here’s how to get page content using Request:

const request = require('request');

request('http://stackabuse.com', function(err, res, body) {

console.log(body);

});

Osmosis can process AJAX/CSS content, log URLs, operate on cookies, and more. Despite its rich functionality, it doesn’t overburden you with numerous dependencies (unlike Cheerio). Here’s how you can gather some basic page information:

osmosis

.get(url)

.set({

heading: 'h1',

title: 'title'

})

.data(item => console.log(item));

Cheerio is an implementation of core jQuery which excels at parsing markup content; additionally, it offers an API to process and manipulate the data structure you acquire. Here’s how you can load the HTML object and find the parent of the first selected element:

var request = require('request');

var cheerio = require('cheerio');

request('http://www.google.com/', function(err, resp, html) {

if (!err){

const $ = cheerio.load(html);

console.log(html);

}

});

$('.testElement').parent().attr('id')

//=> testParent

Lastly, the Apify SDK is the most powerful tool that comes to rescue when other solutions fall flat during heavier tasks: performing a deep crawl of the whole web resource, rotating proxies to mask the browser, scheduling the scraper to run multiple times, caching results to prevent data prevention if code happens to crash, and more. Apify handles such operations with ease — but it can also help to develop web scrapers of your own. Here’s a simple Hello World implementation:

/**

* Run the following example to perform a recursive crawl of a website using Puppeteer.

*

* To run this example on the Apify Platform, select the `Node.js 8 + Chrome on Debian (apify/actor-node-chrome)` base image

* on the source tab of your actor configuration.

*/

const Apify = require('apify');

Apify.main(async () => {

const requestQueue = await Apify.openRequestQueue();

await requestQueue.addRequest({ url: 'https://www.iana.org/' });

const pseudoUrls = [new Apify.PseudoUrl('https://www.iana.org/[.*]')];

const crawler = new Apify.PuppeteerCrawler({

requestQueue,

handlePageFunction: async ({ request, page }) => {

const title = await page.title();

console.log(`Title of ${request.url}: ${title}`);

await Apify.utils.enqueueLinks({ page, selector: 'a', pseudoUrls, requestQueue });

},

maxRequestsPerCrawl: 100,

maxConcurrency: 10,

});

await crawler.run();

});

Web scraping libraries & frameworks in Python

Python has become the standard: boasting both simplicity and power (qualities that really shine during Python technical interviews), it’s the go-to language to power web scraping. As for the tools themselves, it may be tempting to compare them and claim Tool A reigns supreme! Everyone, abandon your Tools B and Tools C before it’s too late! However, we should keep in mind that every web scraping tool has its pros and cons, so do remember to ensure that you’ve picked the right tool for the job.

Scrapy is pretty much Django for web scraping (to understand the comparison better, check our Django interview questions out!): with its batteries included approach, it’ll probably manage to satisfy your project’s requirements. The tricky part, however, is understanding when not to apply Scrapy: choosing it for a simple project would be similar to building a full-blown PWA in React for a simple blog. Scrapy, therefore, excels at large projects — it’s extremely well-optimized, CPU- and memory-wise. Here’s how to scrape famous quotes from a web resource we specify:

import scrapy

class QuotesSpider(scrapy.Spider):

name = 'quotes'

start_urls = [

'http://quotes.toscrape.com/tag/humor/',

]

def parse(self, response):

for quote in response.css('div.quote'):

yield {

'text': quote.css('span.text::text').get(),

'author': quote.xpath('span/small/text()').get(),

}

next_page = response.css('li.next a::attr("href")').get()

if next_page is not None:

yield response.follow(next_page, self.parse)

Urllib is a handy little library for working with URLs, included in Python’s standard library. Its modules are designed to simplify all operations that are typically carried out with URLs. Here’s what they can do:

urllib.requestopens and reads URL.

urllib.errorprovides the exceptions that can be raised by.request

urllib.parseparses URLs.

urllib.robotparserparses robots.txt files.

Here’s how to open the main page of python.org and display its first 300 bytes:

import urllib.request

with urllib.request.urlopen('http://www.python.org/') as f:

print(f.read(300))

b'<!DOCTYPE html PUBLIC "-//W3C//DTD XHTML 1.0 Transitional//EN"

"http://www.w3.org/TR/xhtml1/DTD/xhtml1-transitional.dtd">\n\n\n<html

xmlns="http://www.w3.org/1999/xhtml" xml:lang="en" lang="en">\n\n<head>\n

<meta http-equiv="content-type" content="text/html; charset=utf-8" />\n

<title>Python Programming '

Conclusion

All in all, web scraping is worth the effort — many companies have built successful businesses around the ability to gather data and serve it where it’s needed most. Chances are, one of your projects might capitalize on scraping capabilities — so once you finally start harvesting all the data available on the web, do make sure to do so responsibly! 🙂