Brute forcing is one of the oldest tricks in the black hat hacker’s handbook. The most common way of performing a brute force attack is to configure a set of predetermined values, make requests to a server (in this case, your API) and analyse the responses.

For efficiency’s sake, an attacker would probably prefer a dictionary attack. A ‘dictionary’ is a gigantic list of passwords (these can simply be commonly-used passwords or perhaps values amalgamated from past data breaches).

If the attacker has reason to believe a dictionary won’t work against a particular target, however, they might fall back on the traditional (and far less efficient) approach: trying random combinations of alphanumeric and special characters in the hope they get lucky.

If you have a login or registration form on your website, you’re already a target. No login form should ever be released to the wild without at least some protection in place.

In this article, we cover exactly that: the basics of what rate limiting is, how it can be implemented in NodeJS, and how it adds an extra layer of security to your API.

What is rate limiting

Rate limiting is a technique used to control the flow of data to and from a server by limiting the number of interactions a user can have with your API. This prevents a single user from using up too many resources (either accidentally or on purpose).

For example, your SaaS messaging app could limit unregistered users to 1,000 API requests per day. Any further requests are denied.

To control the flow of data this way, we first need to decide how to recognise excessive requests before they reach our business logic code. How do we identify who needs to be blocked? Commonly-used identifiers are:

- A user id: most effective for a service that authenticates users and assigns them unique identifiers, for example when your service has fixed limits that have already been communicated to the end user. This is also a good choice if your service doesn’t collect user data like IP addresses and location data. Note that any unique value can serve as a ‘user id’, including private/secret keys and usernames.

- IP address: this method works best if you don’t have any other way to uniquely identify a user. While it works very well in some cases, you should be careful not to inadvertently block users with shared IP addresses.

- Geolocation: malicious traffic often comes disproportionately from a small number of regions. With rate limiting, requests from those regions can be throttled or blocked without affecting users elsewhere.
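The choice of identifier can be sketched as a small helper that prefers a user id and falls back to the IP address. This is only an illustration: `rateLimitKey` is a hypothetical name, and the sketch assumes an Express-style request object where your own authentication layer has set `req.user.id` and `req.ip` holds the client address.

```javascript
// Hypothetical helper: pick the value used to count a client's requests.
// Assumes an Express-style request; `req.user` is set by your own auth layer.
function rateLimitKey(req) {
  if (req.user && req.user.id != null) {
    return `user:${req.user.id}`; // authenticated: limit per account
  }
  return `ip:${req.ip}`; // anonymous: fall back to the client IP
}
```

Prefixing the key with its type keeps a numeric user id from colliding with some other identifier stored in the same cache.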

Under the hood: rate-limiting algorithms

There are several algorithms that can be used to rate limit user interactions, each of which has its unique drawbacks and advantages.

Leaky bucket algorithm

The leaky bucket algorithm is commonly described in two ways: as a queue or as a meter. We won’t get into the specifics of the latter. Instead, let’s consider a bucket filled with water that has a hole at the bottom.

Important characteristics of this bucket to note are:

- The water leaks at a (reasonably) constant rate.

- If a large amount of water is added to the bucket at once, the water overflows. However, this doesn’t affect the leak rate.

- If the average rate of pouring water into the bucket is larger than the leak rate, the bucket will overflow. Again, this doesn’t affect the leak rate.

The leaky bucket algorithm borrows from this analogy.

When the API gets a request, it’s added to a queue, and the queued requests are processed at regular intervals. If the queue is already full, new requests are discarded. It’s essentially a fixed-size FIFO (first-in, first-out) queue.

One great advantage this algorithm offers is that it smooths out the processing of requests to an approximately constant rate. It’s also easy to implement on a single server or load balancer and is quite memory efficient thanks to the limited queue size.

The leaky bucket algorithm does have its disadvantages, though. For instance, a sudden burst of legitimate traffic means newer requests are going to be starved of attention since the queue is filled up. This will have the unintended consequence of making the user experience rather slow.
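The queue form of the leaky bucket can be sketched in a few lines. This is a minimal illustration rather than a production implementation: the `LeakyBucket` class, its method names and the capacity of 2 are all assumptions made for the example.

```javascript
// Leaky bucket as a bounded FIFO queue (illustrative sketch).
class LeakyBucket {
  constructor(capacity) {
    this.capacity = capacity; // maximum number of queued requests
    this.queue = [];
  }

  // Try to enqueue a request; returns false when the bucket overflows.
  add(request) {
    if (this.queue.length >= this.capacity) {
      return false; // bucket is full: the new request is dropped
    }
    this.queue.push(request);
    return true;
  }

  // Process ("leak") the oldest queued request, if there is one.
  leak(handler) {
    const request = this.queue.shift();
    if (request !== undefined) {
      handler(request);
    }
  }
}

const bucket = new LeakyBucket(2);
const processed = [];
const accepted = ['a', 'b', 'c'].map((r) => bucket.add(r)); // 'c' overflows
bucket.leak((r) => processed.push(r)); // handles 'a', the oldest request
```

In a real server, `leak` would be called on a timer (for example with `setInterval`) so that requests drain at a constant rate no matter how quickly they arrive.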

Token bucket algorithm

In the token bucket algorithm, we create a ‘bucket’ for every user and fill it with a certain number of ‘tokens’. Every time a user makes a request to our API, we subtract some tokens from their bucket and update it with a timestamp indicating when the update happened.

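After a fixed period of time (referred to as the window), the bucket is refilled with tokens, essentially resetting a user’s usage back to zero. Many implementations instead add tokens back gradually at a steady rate, up to the bucket’s capacity.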

A major advantage of the token bucket algorithm is that it accounts for burstiness. It’s not adversely affected by a sudden burst in traffic the same way the leaky bucket algorithm is.
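A minimal sketch of a token bucket is shown here. It uses the gradual-refill variant, topping the bucket up based on the time elapsed since the stored timestamp, rather than resetting it once per window. The `TokenBucket` class, the `allow` helper and the numbers used (5 tokens, 1 token per second) are assumptions for illustration.

```javascript
// Token bucket with time-based refill (illustrative sketch).
class TokenBucket {
  constructor(capacity, refillPerSecond, now = Date.now) {
    this.capacity = capacity;
    this.refillPerSecond = refillPerSecond;
    this.now = now; // injectable clock, handy for testing
    this.tokens = capacity; // start with a full bucket
    this.updatedAt = now(); // timestamp of the last update
  }

  // Returns true and spends `cost` tokens if the request is allowed.
  tryRemove(cost = 1) {
    const current = this.now();
    const elapsedSeconds = (current - this.updatedAt) / 1000;
    // Top up for the time that has passed, never exceeding capacity.
    this.tokens = Math.min(
      this.capacity,
      this.tokens + elapsedSeconds * this.refillPerSecond
    );
    this.updatedAt = current;
    if (this.tokens < cost) {
      return false; // not enough tokens: reject the request
    }
    this.tokens -= cost;
    return true;
  }
}

// One bucket per user, keyed by whatever identifier you chose.
const buckets = new Map();
function allow(userId) {
  if (!buckets.has(userId)) {
    buckets.set(userId, new TokenBucket(5, 1));
  }
  return buckets.get(userId).tryRemove();
}
```

Because a full bucket can be spent all at once, a short burst is let through immediately, while the refill rate still caps sustained traffic.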

For the sake of conciseness, this article won’t cover the fixed window, sliding window and sliding window log algorithms. While simple to implement, each has trade-offs: a fixed window allows bursts around window boundaries, for instance, and a sliding window log can use a lot of memory. Figma covers them in depth, along with some practical considerations before shipping to production.

Preventing endpoint brute forcing in NodeJS

Not all endpoints in your app need to be protected against brute forcing. For example, a private endpoint protected with a JWT for internal use isn’t a high-priority target. Adding some form of rate limiting might still be highly advantageous in case of a rogue actor, however.

First, let’s set up a dummy project with Express:

npx --package express-generator express

We could write the whole algorithm ourselves, but there’s no need to re-invent the wheel. We’ll rely on express-brute for our simple implementation.

npm install redis ms express-brute express-brute-redis --save

- redis: our in-memory cache

- ms: helps to convert units to milliseconds

- express-brute: Node implementation of a rate-limiting algorithm

- express-brute-redis: Redis store for express-brute

Here is our folder structure (ignoring a bunch of files that Express included but we don’t need):

.
├── app.js
├── bin
│   └── www
├── middleware
│   ├── index.js
│   └── rateLimiter.js
├── package.json
├── package-lock.json
└── routes
    └── index.js

In the middleware folder:

// index.js
module.exports.limiter = require('./rateLimiter');

// rateLimiter.js
const ms = require('ms');
const redis = require('redis');
const ExpressBrute = require('express-brute');
const RedisStore = require('express-brute-redis');

const handleStoreError = function (error) {
  console.error(error); // log this error so we can figure out what went wrong
  // cause node to exit, hopefully restarting the process fixes the problem
  throw {
    message: error.message,
    parent: error.parent
  };
};

// Create a new Redis client using the default connection settings
const redisClient = redis.createClient();

redisClient.on('connect', function () {
  console.log('Connected to redis');
});

redisClient.on('error', function (error) {
  // include the error itself so the cause is visible in the logs
  console.error('Redis error:', error);
});

// Store the attempt counters in Redis
const store = new RedisStore({
  client: redisClient,
});

module.exports = new ExpressBrute(store, {
  freeRetries: 3,
  minWait: ms('10s'),
  maxWait: ms('1min'),
  handleStoreError
});
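The exported limiter still has to be attached to a route. express-brute instances expose a `prevent` middleware for this. The sketch below shows one way the wiring could look; the `/login` path, the `registerLoginRoute` function and the `checkCredentials` callback are hypothetical names, and the router and limiter are passed in as arguments to keep the example self-contained.

```javascript
// routes/index.js (sketch): protect a hypothetical POST /login route.
// `router` is an Express router and `limiter` is the ExpressBrute instance
// exported from middleware/rateLimiter.js.
function registerLoginRoute(router, limiter, checkCredentials) {
  router.post('/login', limiter.prevent, (req, res) => {
    // Only reached while the client is within its allowed attempts.
    if (checkCredentials(req.body)) {
      res.json({ ok: true });
    } else {
      res.status(401).json({ ok: false });
    }
  });
}
```

In the generated project this could be called with `express.Router()` and `require('../middleware').limiter`. Endpoints that don’t need protection simply leave the middleware out.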

- freeRetries: the number of tries a user has before they are ‘blacklisted’

- minWait: the time a user has to wait when they run out of retries for the first time

- maxWait: the maximum amount of time a user will have to wait

- handleStoreError: handles the error if anything goes wrong with Redis

In plain terms:

- A user can incorrectly enter their username/password combination three times (freeRetries).

- The first time they run out of retries, the user has to wait 10 seconds (minWait) before being able to try again.

- If they keep getting it wrong, the wait grows beyond 10 seconds (the exact time is determined by the library, whose README describes each wait as the sum of the previous two).

- The maximum wait time is 1 minute (maxWait).

- A loose way to think of the above code is ‘a handful of attempts per minute’: once the free retries are used up, attempts have to be spaced between minWait and maxWait apart.
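To get a feel for how the waits escalate, the rule from the express-brute README (each wait is the sum of the previous two, capped at maxWait) can be sketched as a small function. This is an illustration of that rule under stated assumptions, not the library’s actual source, and `delaySchedule` is a hypothetical name.

```javascript
// Sketch of the wait times implied by "each wait is the sum of the
// previous two, capped at maxWait". Not express-brute's actual source.
function delaySchedule(minWait, maxWait) {
  const delays = [minWait];
  while (delays[delays.length - 1] < maxWait) {
    const last = delays[delays.length - 1];
    const previous = delays.length > 1 ? delays[delays.length - 2] : 0;
    delays.push(last + previous);
  }
  delays[delays.length - 1] = maxWait; // never wait longer than maxWait
  return delays;
}

// Waits in seconds for minWait = 10s and maxWait = 60s.
const waits = delaySchedule(10, 60);
console.log(waits); // [ 10, 10, 20, 30, 50, 60 ]
```

Under this rule, a client that keeps failing reaches the 1 minute ceiling after only a few attempts.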

Voila! We’re done!

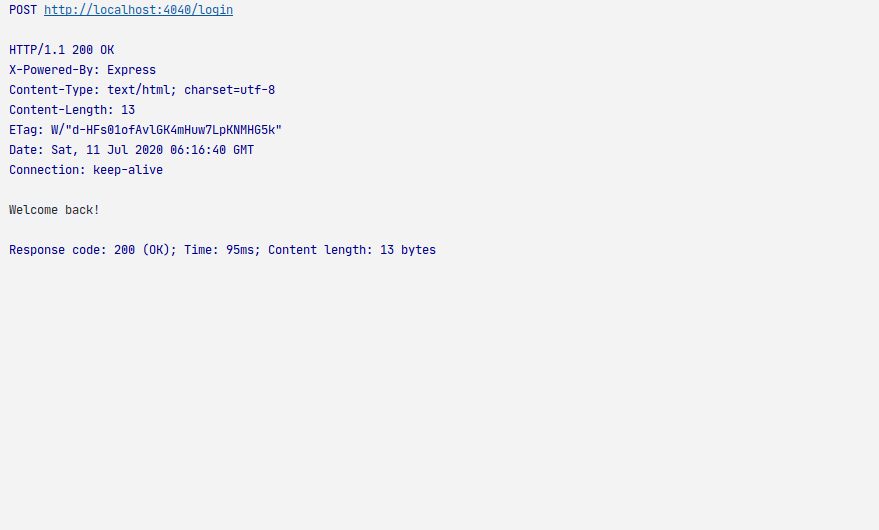

How does this look in practice? (For this test, I set the following arbitrary values: freeRetries=1, minWait=1s, maxWait=10s.)

When we hit the API for the first time, everything goes smoothly:

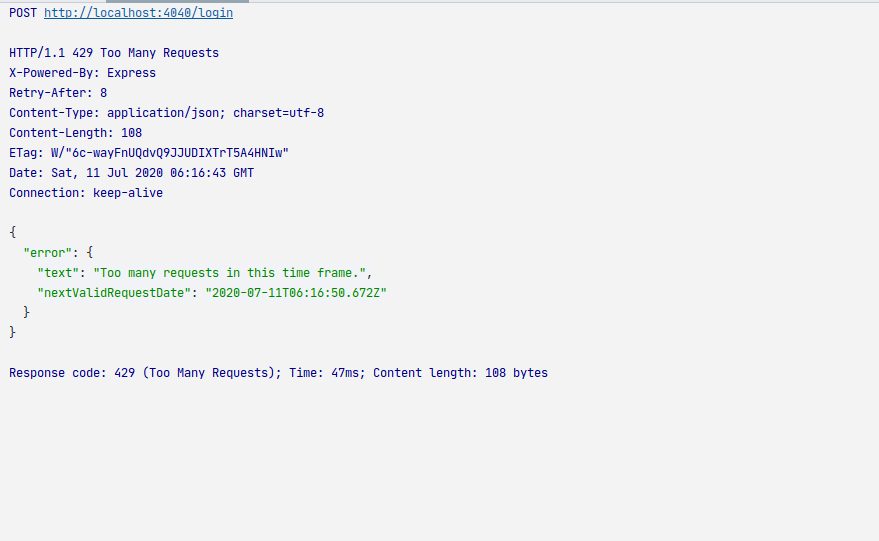

If we try again within 10 seconds:

Notice the Retry-After header. This tells the client they are allowed to try again in ‘x seconds’ (9 seconds in this case).

If you wanted to change the header to something like ‘X-Rate-Limit’, use the library’s failCallback option to send a custom response with your own header.

The full code is on GitHub: https://github.com/Bradleykingz/node-rate-limiter-tutorial
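A custom failure handler might look like the sketch below. It assumes the `(req, res, next, nextValidRequestDate)` signature that express-brute documents for its `failCallback` option. The function name `rateLimitExceeded`, the header value format and the JSON body are choices made for this example.

```javascript
// Hypothetical failure handler for express-brute's `failCallback` option.
// `nextValidRequestDate` is the Date at which the client may try again.
function rateLimitExceeded(req, res, next, nextValidRequestDate) {
  const msLeft = nextValidRequestDate.getTime() - Date.now();
  const seconds = Math.max(0, Math.ceil(msLeft / 1000));
  res.set('X-Rate-Limit', String(seconds)); // custom header named in the text
  res.status(429).json({
    error: 'Too many requests',
    retryAfterSeconds: seconds
  });
}
```

It would be passed alongside the other options, for example `failCallback: rateLimitExceeded`, when constructing the ExpressBrute instance.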

Conclusion

In this article, we covered the basics of rate limiting and how it can be used to secure your API against malicious actors. It’s important to note, however, that rate limiting isn’t the only way to mitigate brute force attacks and can, in fact, be downright useless against a determined attacker.

The methods of attack are often complex and varied, as described by the Open Web Application Security Project (OWASP). As such, rate limiting isn’t a silver bullet for all your anti-brute-forcing needs.

For a more detailed overview of the topic and the various methods you can use to keep your services safe, read this other guide by OWASP.