Developed by OpenAI, DALL·E is a generative AI model that turns textual prompts into diverse and imaginative images. It builds on the success of OpenAI’s GPT models by applying a similar approach to image generation, opening up a range of possibilities for creative expression, design, and visual storytelling.

DALL·E employs several cutting-edge technologies, such as natural language processing (NLP), large language models (LLMs), and diffusion processing. The original DALL·E was developed as a version of the GPT-3 LLM with 12 billion parameters, adapted for image generation, in contrast to GPT-3’s full 175 billion parameters.

The Need for Customization: While the pre-trained capabilities of DALL·E are impressive, customization becomes essential when your application demands a more personalized touch. For developers and AI enthusiasts eager to explore those options, this guide walks through the details of adapting DALL·E to your specific requirements.

Overview of DALL·E’s Neural Architecture

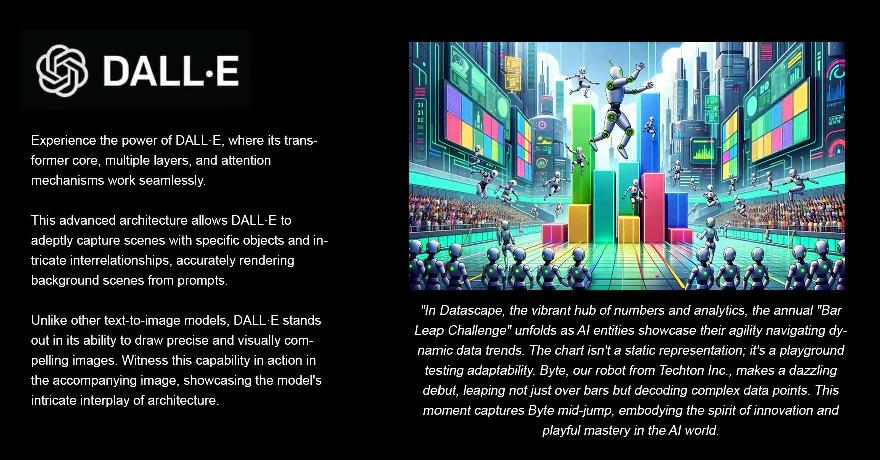

DALL·E’s key strength is its adaptability across diverse datasets, and its neural network design stands out for its ability to generate images that closely match textual prompts. Understanding how DALL·E interprets text inputs is fundamental to using it effectively with custom datasets.

The Transformer Core

DALL·E operates on a transformer-based architecture, inheriting the success and adaptability of OpenAI’s GPT models. This choice of architecture is understandable, as transformers are historically well-suited for processing sequential data.

In practical terms, the transformer-based foundation provides DALL·E with the capability to efficiently process information in a parallelized manner, facilitating the translation of textual descriptions into coherent and contextually relevant images.

Layers & Attention Mechanisms

Within the transformer-based architecture, the layers and attention mechanisms are some of the integral components that contribute to the model’s ability to generate high-quality images.

- Layers: DALL·E’s architecture consists of multiple layers, each responsible for processing and transforming input data hierarchically. As the textual information passes through the transformer layers, it undergoes transformations and feature extraction. Each layer contributes to shaping the final image representation.

- Attention Mechanisms: The presence of attention mechanisms allows DALL·E to focus on different parts of the input text, enhancing its capacity to capture intricate details and relationships.
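To make the idea of attention concrete, here is a minimal sketch of scaled dot-product attention in plain Python. It is a toy illustration, not DALL·E’s actual implementation: the token list, the two-dimensional vectors, and the function names are all made up for this example.

```python
import math

def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # Weighted sum of the value vectors, one output dimension at a time.
    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return output, weights

# Toy 2-dimensional embeddings for three prompt tokens (made-up numbers).
tokens = ["red", "sports", "car"]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[0.9, 0.1], [0.2, 0.8], [0.5, 0.5]]
query = [1.0, 1.0]

output, weights = attention(query, keys, values)
for token, weight in zip(tokens, weights):
    print(f"{token}: {weight:.3f}")
```

The token whose key is most similar to the query receives the largest weight, which is how the model decides which parts of the prompt matter most at a given step.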

Creating a Virtual Environment

Setting up the environment for training DALL·E involves creating a virtual environment, installing the necessary libraries, and preparing the dataset. A dedicated virtual environment also keeps your DALL·E project isolated from system-wide libraries.

In the root directory of your project, execute the following commands in your terminal:

    # Create a virtual environment named 'dalle_venv'
    virtualenv dalle_venv

    # Activate the virtual environment
    source dalle_venv/bin/activate

We have now isolated our project by providing a controlled virtual environment named dalle_venv. Every time you work on your DALL·E project, activate the virtual environment using the source dalle_venv/bin/activate command in your terminal.
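If you are unsure whether the environment is active, a quick check from Python can help. The sketch below relies on the standard library only; the helper name in_virtual_environment is our own.

```python
import sys

def in_virtual_environment():
    """Return True when the interpreter runs inside a virtual environment."""
    # venv and recent virtualenv releases point sys.prefix at the environment
    # directory while sys.base_prefix keeps pointing at the base installation.
    base_prefix = getattr(sys, "base_prefix", sys.prefix)
    return sys.prefix != base_prefix

if in_virtual_environment():
    print(f"Virtual environment active: {sys.prefix}")
else:
    print("No virtual environment detected; run 'source dalle_venv/bin/activate' first.")
```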

Installing Dependencies

Install the required libraries, including the OpenAI Python SDK, PyTorch, and other supporting libraries:

    # Install the OpenAI Python SDK (the examples use the pre-1.0 openai.Image interface)
    pip install "openai<1.0"

    # Install PyTorch
    pip install torch torchvision torchaudio

    # Install other necessary libraries
    pip install opencv-python numpy matplotlib

The openai library serves as the interface to the OpenAI API. PyTorch (torch, torchvision, torchaudio) is a widely used open-source deep learning library equipped with tools for building and training neural networks. In projects that involve custom datasets, it handles data loading, the forward and backward passes during training, and the optimization of model parameters.

In addition to PyTorch, we install opencv-python, numpy, and matplotlib. OpenCV provides image processing and computer vision tools, including image input/output. NumPy handles array manipulation and mathematical operations. Lastly, Matplotlib is a versatile plotting library that we use to visualize images, training progress, and evaluation metrics within our DALL·E project.
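Before moving on, it can be useful to confirm that everything is importable. The following is a small sketch using only the standard library; the list of module names is our assumption based on the packages installed above (note that opencv-python is imported as cv2 and Pillow as PIL).

```python
import importlib.util

# Import names can differ from pip package names:
# opencv-python is imported as cv2, Pillow as PIL.
REQUIRED_MODULES = ["openai", "torch", "torchvision", "cv2", "numpy", "matplotlib", "PIL"]

def find_missing_modules(module_names):
    """Return the module names that cannot be located in this environment."""
    return [name for name in module_names if importlib.util.find_spec(name) is None]

missing = find_missing_modules(REQUIRED_MODULES)
if missing:
    print("Missing modules:", ", ".join(missing))
else:
    print("All required modules are importable.")
```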

Preparing the Dataset

Before creating a custom dataset class, we need to organize our dataset with a clear directory structure. Consider the following structure:

    custom_dataset/
    ├── class1/
    │   ├── img1.jpg
    │   ├── img2.jpg
    │   └── ...
    ├── class2/
    │   ├── img1.jpg
    │   ├── img2.jpg
    │   └── ...
    └── ...
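A quick way to sanity-check this layout is to count the files in each class folder. The sketch below builds a throwaway copy of the structure in a temporary directory so it can run anywhere; the helper name count_images_per_class is our own.

```python
import tempfile
from collections import Counter
from pathlib import Path

def count_images_per_class(root_dir):
    """Count files in each immediate subdirectory (one subdirectory per class)."""
    counts = Counter()
    for class_dir in sorted(Path(root_dir).iterdir()):
        if class_dir.is_dir():
            counts[class_dir.name] = sum(1 for p in class_dir.iterdir() if p.is_file())
    return dict(counts)

# Build a throwaway dataset that mirrors the layout above.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp) / "custom_dataset"
    for class_name in ("class1", "class2"):
        (root / class_name).mkdir(parents=True)
        for file_name in ("img1.jpg", "img2.jpg"):
            (root / class_name / file_name).touch()
    summary = count_images_per_class(root)
    print(summary)
```

Pointing count_images_per_class at your real dataset root shows at a glance whether any class is empty or heavily under-represented.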

Create a Custom Dataset Class

Now, let’s create a custom dataset class. It subclasses PyTorch’s Dataset to handle the loading and transformation of images, and it uses the OpenAI SDK to request generated images.

    import os

    import openai
    from PIL import Image
    from torch.utils.data import Dataset


    class CustomDataset(Dataset):
        def __init__(self, root_dir, api_key, transform=None):
            """
            CustomDataset constructor.

            Parameters:
            - root_dir (str): Root directory containing the organized dataset.
            - api_key (str): Your OpenAI API key for DALL·E interaction.
            - transform (callable, optional): A function/transform to apply to the images.
            """
            self.root_dir = root_dir
            self.transform = transform
            self.img_paths = self._get_img_paths()
            self.api_key = api_key

        def _get_img_paths(self):
            """
            Private method to retrieve all image paths within the specified root directory.
            """
            return [os.path.join(root, file) for root, dirs, files in os.walk(self.root_dir) for file in files]

        def __len__(self):
            """
            Returns the total number of images in the dataset.
            """
            return len(self.img_paths)

        def __getitem__(self, idx):
            """
            Loads and returns the image at the specified index, with optional transformations.

            Parameters:
            - idx (int): Index of the image.

            Returns:
            - image (PIL.Image): Loaded image.
            """
            img_path = self.img_paths[idx]
            image = Image.open(img_path).convert('RGB')
            if self.transform:
                image = self.transform(image)
            return image

        def generate_prompt(self, image_path):
            """
            Generates a prompt based on the image path.

            Parameters:
            - image_path (str): Path to the image.

            Returns:
            - prompt (str): Generated prompt.
            """
            return f"Generate an image based on the contents of {image_path}"

        def generate_image(self, image_path):
            """
            Generates an image using DALL·E based on the provided image path.

            Parameters:
            - image_path (str): Path to the image.

            Returns:
            - generated_image_url (str): URL of the generated image.
            """
            prompt = self.generate_prompt(image_path)
            # Authenticate with the key stored on the dataset.
            openai.api_key = self.api_key
            # The image generation endpoint takes a text prompt, a count, and a size;
            # it does not accept a local file, so only the prompt is sent.
            response = openai.Image.create(prompt=prompt, n=1, size="1024x1024")
            generated_image_url = response['data'][0]['url']
            return generated_image_url

- Constructor (__init__): The constructor initializes the dataset with the root directory, OpenAI API key, and an optional transform function for image preprocessing.

- _get_img_paths: This private method walks the root directory and collects every file path it finds, so the dataset picks up changes to the directory each time it is instantiated.

- __len__: Returns the total number of images in the dataset, facilitating easy determination of the dataset size.

- __getitem__: Loads and returns an image at a specified index. Applies optional transformations using the provided transform function.

- generate_prompt: Generates a prompt based on the image path. Only the prompt text reaches DALL·E, so descriptive folder and file names give the model more to work with.

- generate_image: Builds a prompt for the given image path, sends it to DALL·E, and returns the URL of the generated image.
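One practical detail: walking a directory with os.walk returns every file, including notes or hidden system files that are not images. A possible refinement, sketched below with the standard library only, filters by extension. The IMAGE_EXTENSIONS tuple and the list_image_paths helper are assumptions for illustration, not part of the class above.

```python
import os
import tempfile

IMAGE_EXTENSIONS = (".jpg", ".jpeg", ".png")  # assumed set; extend as needed

def list_image_paths(root_dir, extensions=IMAGE_EXTENSIONS):
    """Walk root_dir and return sorted paths whose extension looks like an image."""
    paths = []
    for folder, _dirs, files in os.walk(root_dir):
        for name in files:
            if name.lower().endswith(extensions):
                paths.append(os.path.join(folder, name))
    return sorted(paths)

# Demonstrate on a temporary folder containing images and stray files.
with tempfile.TemporaryDirectory() as root:
    os.makedirs(os.path.join(root, "class1"))
    for name in ("img1.jpg", "IMG2.PNG", "notes.txt", ".DS_Store"):
        open(os.path.join(root, "class1", name), "w").close()
    found = [os.path.basename(p) for p in list_image_paths(root)]
    print(found)
```

Swapping this logic into _get_img_paths would keep Image.open from failing on files that are not images.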

Diagrammatic View of the Class CustomDataset

    +--------------------------------+   +------------------------+   +------------------------+
    | CustomDataset                  |   | Dataset                |   | OpenAI                 |
    |--------------------------------|   |------------------------|   |------------------------|
    | - root_dir: str                |   | - root_dir: str        |   | - Image.create(...)    |
    | - transform: callable          |   | - transform: callable  |   |------------------------|
    | - img_paths: list              |   | - img_paths: list      |   | + create(...)          |
    | - api_key: str                 |   +------------------------+   +------------------------+
    |--------------------------------|                                            |
    | + _get_img_paths()             |                                            v
    | + __len__()                    |                                +------------------------+
    | + __getitem__(idx)             |                                | Image                  |
    | + generate_prompt(image_path)  |                                |------------------------|
    | + generate_image(image_path)   |                                | - create(...)          |
    +--------------------------------+                                +------------------------+

Training DALL·E

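Training is the process through which the model learns the patterns, features, and styles embedded in a given dataset. In this example, we’ll sketch a training request through the OpenAI API. The public Images API reference covers generating, editing, and varying images, so treat the training parameters shown here as illustrative rather than documented.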

    import openai

    def train_dalle(api_key, image_paths):
        openai.api_key = api_key

        # Set up training configuration
        training_config = {
            "num_images": len(image_paths),
            "image_paths": image_paths,
            "model": "image-alpha-001",
            "steps": 1000,
            "learning_rate": 1e-4
        }

        # Start training
        response = openai.Image.create(**training_config)

        # Check training status
        if response['status'] == 'completed':
            print("DALL·E training completed successfully.")
        else:
            print("DALL·E training failed. Check the OpenAI API response for details.")
            print(response)

Initiate the training process by providing the API key and the paths to your images.

    # Example usage
    api_key = "your_openai_api_key"
    dataset_path = "path/to/custom_dataset"
    custom_dataset = CustomDataset(root_dir=dataset_path, api_key=api_key)
    image_paths = custom_dataset.img_paths

    # Train DALL·E
    train_dalle(api_key=api_key, image_paths=image_paths)

Here’s a little breakdown of our code.

Setting Up API Key: The initial step involves setting up the OpenAI API key. It’s the access point that allows the script to send requests for training and receive responses.

    import openai

    # Set up OpenAI API key
    openai.api_key = api_key

Defining Training Configuration: The training process relies on the configuration parameters. The training_config dictionary contains the following:

- num_images: The total number of images in the dataset.

- image_paths: The paths to the images in the dataset.

- model: Specifies the DALL·E model to be used (e.g., “image-alpha-001”).

- steps: The number of training steps to iterate over the dataset.

- learning_rate: The learning rate, determining the size of steps taken during optimization.

    # Define training configuration
    training_config = {
        "num_images": len(image_paths),
        "image_paths": image_paths,
        "model": "image-alpha-001",
        "steps": 1000,
        "learning_rate": 1e-4
    }
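Since a mistyped value in this dictionary only surfaces once the request is sent, it can help to validate the configuration locally first. The following is a minimal sketch: the function name validate_training_config and the accepted ranges are assumptions chosen for illustration, while the keys mirror the dictionary above.

```python
def validate_training_config(config):
    """Return a list of human-readable problems found in a config dictionary."""
    problems = []
    if not config.get("image_paths"):
        problems.append("image_paths is empty")
    elif config.get("num_images") != len(config["image_paths"]):
        problems.append("num_images does not match len(image_paths)")
    steps = config.get("steps")
    if not isinstance(steps, int) or steps <= 0:
        problems.append("steps must be a positive integer")
    learning_rate = config.get("learning_rate")
    # The (0, 1) range is an assumed sanity bound, not a documented limit.
    if not isinstance(learning_rate, float) or not 0 < learning_rate < 1:
        problems.append("learning_rate must be a float between 0 and 1")
    return problems

config = {
    "num_images": 2,
    "image_paths": ["custom_dataset/class1/img1.jpg", "custom_dataset/class1/img2.jpg"],
    "model": "image-alpha-001",
    "steps": 1000,
    "learning_rate": 1e-4,
}
print(validate_training_config(config))
print(validate_training_config({**config, "steps": 0}))
```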

Initiating Training: The openai.Image.create method is then employed to kickstart the training process. This function sends a request to the DALL·E model, with the necessary configurations. During each training step, DALL·E refines its understanding of the dataset, recognizing unique features and relationships among images.

    # Initiate training
    response = openai.Image.create(**training_config)

Checking Training Status: The script is designed to check the status of the training process. If the training completes successfully, a confirmation message is printed. In case of failure, the script prints details from the OpenAI API response for debugging.

    # Check training status
    if response['status'] == 'completed':
        print("DALL·E training completed successfully.")
    else:
        print("DALL·E training failed. Check the OpenAI API response for details.")

You can also view the DALL·E training flow from the diagram below:

    +-----------------------------+
    | Start                       |
    +-----------------------------+
                   |
                   v
    +-----------------------------+
    | Set API Key and Image Paths |
    |                             |
    |    +-------------------+    |
    |    | API Key Set       |    |
    |    +-------------------+    |
    |    | Image Paths Set   |    |
    |    +-------------------+    |
    |                             |
    +-----------------------------+
                   |
                   v
    +-----------------------------+
    | Set Training Configuration  |
    |                             |
    |    +-------------------+    |
    |    | Num Images Set    |    |
    |    +-------------------+    |
    |    | Image Paths Set   |    |
    |    +-------------------+    |
    |    | Model Set         |    |
    |    +-------------------+    |
    |    | Steps Set         |    |
    |    +-------------------+    |
    |    | Learning Rate Set |    |
    |    +-------------------+    |
    |                             |
    +-----------------------------+
                   |
                   v
    +-----------------------------+
    | Start Training              |
    |                             |
    |    +-------------------+    |
    |    | Training Request  |    |
    |    +-------------------+    |
    |        |           |        |
    |        v           v        |
    |     Success     Failure     |
    |        |           |        |
    |        v           v        |
    | Print Success Print Failure |
    |                             |
    +-----------------------------+
                   |
                   v
    +-----------------------------+
    | End                         |
    +-----------------------------+

How DALL·E Learns:

DALL·E learns to generate images that align with the patterns and features present in the provided dataset. During training, it refines its understanding of the dataset, recognizing unique features and relationships among images.

In the previous example, the training process involves 1000 steps, and the learning rate determines the size of the optimization steps taken during the training iterations. The training dataset, represented by image_paths, is crucial for DALL·E to learn and generalize from the provided images.
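To build some intuition for how the number of steps and the learning rate interact, here is a toy gradient descent on a one-parameter function. It is a deliberately simplified sketch, unrelated to DALL·E’s actual training loop; the function f(w) = (w - 3)^2 and the helper name are made up for the example.

```python
def gradient_descent(learning_rate, steps, start=0.0, target=3.0):
    """Minimize f(w) = (w - target)^2 with plain gradient descent."""
    w = start
    for _ in range(steps):
        gradient = 2 * (w - target)  # derivative of (w - target)^2
        w -= learning_rate * gradient
    return w

for lr in (1e-4, 1e-2, 1e-1):
    final_w = gradient_descent(learning_rate=lr, steps=1000)
    print(f"learning_rate={lr:g} -> w after 1000 steps = {final_w:.4f}")
```

With the smallest rate, w is still far from the optimum of 3 after 1000 steps, while the larger rates get there comfortably. On this toy problem, a learning rate of 1e-4 would need many more steps to converge.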

Consider a dataset consisting of various landscapes: mountains, beaches, and forests. Training helps DALL·E learn the nuanced details of each landscape type, from the peaks of mountains to the waves of the beach, allowing the model to generate novel, realistic landscapes based on textual prompts.

Monitoring Training Progress

You can further enhance the train_dalle function with progress monitoring, which gives you better visibility into the ongoing training process.

    def monitor_training(api_key, training_job_id):
        """
        Monitor the progress of the DALL·E training job.

        Parameters:
        - api_key (str): Your OpenAI API key.
        - training_job_id (str): The ID of the DALL·E training job.

        Returns:
        None
        """
        openai.api_key = api_key
        response = openai.Image.retrieve(training_job_id)

        # Check training status
        if response['status'] == 'completed':
            print("DALL·E training completed successfully.")
        elif response['status'] == 'failed':
            print("DALL·E training failed. Check the OpenAI API response for details.")
            print(response['error'])
        else:
            print(f"Current step: {response['data']['step']}/{response['data']['total_steps']}")
            print(f"Progress: {response['progress']}%")

The monitor_training function takes the API key and the training job ID as parameters. It retrieves the latest information about the training job using openai.Image.retrieve and then prints the relevant details. If the status is ‘completed’, it prints a success message; if the status is ‘failed’, it prints an error message along with the details. If the training is still in progress, it prints the current step, total steps, and progress percentage.

    # Assuming 'job_id' was obtained from the training response
    monitor_training(api_key=api_key, training_job_id=job_id)

Invoke the monitor_training function with the job_id obtained from the response when initiating the training.
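A single status check is rarely enough for a long-running job, so in practice you would poll until the job reaches a terminal state. The sketch below shows one way to structure such a loop. It is independent of any specific API: fetch_status is a hypothetical callable that returns a dictionary with a 'status' key, and the demo uses simulated responses instead of network calls.

```python
import time

def wait_for_job(fetch_status, poll_seconds=5.0, max_polls=10, sleep=time.sleep):
    """Poll fetch_status() until it reports a terminal state or polls run out.

    fetch_status is a hypothetical callable returning a dict with a 'status' key.
    """
    for attempt in range(1, max_polls + 1):
        response = fetch_status()
        status = response.get("status", "unknown")
        print(f"poll {attempt}: status={status}")
        if status in ("completed", "failed"):
            return response
        sleep(poll_seconds)
    return {"status": "timeout"}

# Simulated job that finishes on the third poll (no network involved).
fake_responses = iter([{"status": "running"}, {"status": "running"}, {"status": "completed"}])
result = wait_for_job(lambda: next(fake_responses), poll_seconds=0)
print(result)
```

Capping the number of polls keeps a stuck job from blocking your script indefinitely.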

Generate Images

Once the DALL·E model is trained, extend the CustomDataset class to incorporate a method for generating images:

    import os
    import random

    import openai
    from PIL import Image
    from torch.utils.data import Dataset


    class CustomDataset(Dataset):
        def __init__(self, root_dir, api_key, transform=None):
            """
            CustomDataset constructor.

            Parameters:
            - root_dir (str): Root directory containing the organized dataset.
            - api_key (str): Your OpenAI API key for DALL·E interaction.
            - transform (callable, optional): A function/transform to apply to the images.
            """
            self.root_dir = root_dir
            self.transform = transform
            self.img_paths = self._get_img_paths()
            self.api_key = api_key

        def _get_img_paths(self):
            """
            Private method to retrieve all image paths within the specified root directory.
            """
            return [os.path.join(root, file) for root, dirs, files in os.walk(self.root_dir) for file in files]

        def __len__(self):
            """
            Returns the total number of images in the dataset.
            """
            return len(self.img_paths)

        def __getitem__(self, idx):
            """
            Loads and returns the image at the specified index, with optional transformations.

            Parameters:
            - idx (int): Index of the image.

            Returns:
            - image (PIL.Image): Loaded image.
            """
            img_path = self.img_paths[idx]
            image = Image.open(img_path).convert('RGB')
            if self.transform:
                image = self.transform(image)
            return image

        def generate_prompt(self, image_path):
            """
            Generates a prompt based on the image path.

            Parameters:
            - image_path (str): Path to the image.

            Returns:
            - prompt (str): Generated prompt.
            """
            return f"Generate an image based on the contents of {image_path}"

        def generate_image(self, image_path):
            """
            Generates an image using DALL·E based on the provided image path.

            Parameters:
            - image_path (str): Path to the image.

            Returns:
            - generated_image_url (str): URL of the generated image.
            """
            prompt = self.generate_prompt(image_path)
            # Authenticate with the key stored on the dataset.
            openai.api_key = self.api_key
            # The image generation endpoint takes a text prompt, a count, and a size;
            # it does not accept a local file, so only the prompt is sent.
            response = openai.Image.create(prompt=prompt, n=1, size="1024x1024")
            return response['data'][0]['url']

        def generate_images(self, num_images=5):
            """
            Generate images using the trained DALL·E model.

            Parameters:
            - num_images (int): Number of images to generate.

            Returns:
            - generated_images (list): List of URLs of the generated images.
            """
            generated_images = []
            for _ in range(num_images):
                # Choose a random image from the dataset
                random_image_path = random.choice(self.img_paths)
                generated_image_url = self.generate_image(random_image_path)
                generated_images.append(generated_image_url)
            return generated_images

The newly added generate_images method operates by choosing random images from your dataset and utilizing DALL·E to generate new images inspired by the chosen ones. The generated images are not mere replicas but imaginative variations shaped by the patterns the model has learned during training.

Call this method in order to generate images once training concludes:

    # Instantiate the CustomDataset class with the required parameters
    api_key = "your_openai_api_key"
    dataset_path = "path/to/custom_dataset"
    custom_dataset = CustomDataset(root_dir=dataset_path, api_key=api_key)

    # Generate images from the trained model
    generated_images = custom_dataset.generate_images(num_images=5)
    for image_url in generated_images:
        print(image_url)

The process involves selecting random images from your dataset, prompting DALL·E to generate entirely new and unique variations. This step allows you to visually inspect the quality of images generated by DALL·E.
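Note that picking with random.choice in a loop can select the same source image more than once. If you prefer distinct source images, or want a repeatable selection for debugging, sampling without replacement is one option. The helper below is a sketch with a made-up name and made-up paths, using only the standard library.

```python
import random

def pick_source_images(img_paths, num_images, seed=None):
    """Pick up to num_images distinct paths; a seed makes the choice repeatable."""
    rng = random.Random(seed)
    count = min(num_images, len(img_paths))
    return rng.sample(list(img_paths), count)

paths = [f"custom_dataset/class1/img{i}.jpg" for i in range(1, 9)]
first = pick_source_images(paths, num_images=5, seed=42)
second = pick_source_images(paths, num_images=5, seed=42)
print(first)
print("Same selection with the same seed:", first == second)
```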

Fine-Tuning Your DALL·E Model

Suppose your objective is to enhance the output of the DALL·E model, tailoring it to highlight specific features, themes, or styles in the generated images. Fine-tuning offers a powerful mechanism to achieve this level of customization.

Create a fine_tune_dalle function to facilitate the fine-tuning process for our DALL·E model.

    def fine_tune_dalle(api_key, custom_dataset, fine_tune_config):
        openai.api_key = api_key

        # Fine-tuning configuration
        fine_tune_config["model"] = "image-alpha-001-finetune"  # Specify fine-tuning model
        fine_tune_config["steps"] = 500  # Adjust steps based on your requirements

        # Fine-tune DALL·E
        response = openai.Image.create(**fine_tune_config)

        # Check fine-tuning status
        if response['status'] == 'completed':
            print("DALL·E fine-tuning completed successfully.")
        else:
            print("DALL·E fine-tuning failed. Check the OpenAI API response for details.")
            print(response)

It’s also important to modify the generate_prompt method within the CustomDataset class so that the generated prompts align with the objectives of your fine-tuning.

    # Modify generate_prompt method for fine-tuning
    def generate_prompt(self, image_path, fine_tuning=True):
        """
        Generates a prompt based on the image path, considering fine-tuning objectives.

        Parameters:
        - image_path (str): Path to the image.
        - fine_tuning (bool): Flag indicating fine-tuning context.

        Returns:
        - prompt (str): Generated prompt.
        """
        if fine_tuning:
            return f"Fine-tune the model to highlight features in {image_path}"
        else:
            return f"Generate an image based on the contents of {image_path}"

Next, utilize the fine_tune_dalle function by providing the necessary configuration for fine-tuning. Feel free to adjust the parameters, the number of steps, and any other relevant settings based on your specific requirements.

    # Dataset curated for fine-tuning (placeholder path)
    fine_tune_dataset = CustomDataset(root_dir="path/to/fine_tune_dataset", api_key=api_key)

    # Fine-tuning configuration
    fine_tune_config = {
        "num_images": len(fine_tune_dataset.img_paths),
        "image_paths": fine_tune_dataset.img_paths,
        "model": "image-alpha-001-finetune",  # Specify fine-tuning model
        "steps": 500  # Adjust steps based on your requirements
    }

    # Fine-tune DALL·E
    fine_tune_dalle(api_key=api_key, custom_dataset=fine_tune_dataset, fine_tune_config=fine_tune_config)

The fine_tune_dalle function utilizes the OpenAI API to perform fine-tuning based on the provided dataset and configuration. The generate_prompt method, modified earlier, contributes to creating prompts tailored for the fine-tuning context.

The generate_prompt method informs the fine-tuning process by generating prompts that guide DALL·E in understanding and highlighting specific features within the curated dataset. The fine_tune_dalle function then executes the fine-tuning based on these prompts.
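Because the model only sees the prompt text, a prompt that names the subject tends to be more useful than one that quotes a file path. One possible approach, sketched below, derives the subject from the class folder that contains the image. The prompt_from_path helper, the "A photo of" template, and the example paths are all assumptions for illustration.

```python
from pathlib import PurePosixPath

def prompt_from_path(image_path, style_hint=""):
    """Build a text prompt from the class folder that contains the image."""
    # Assumes forward-slash paths laid out as <root>/<class_name>/<file>.
    class_name = PurePosixPath(image_path).parent.name.replace("_", " ")
    prompt = f"A photo of {class_name}"
    if style_hint:
        prompt += f", {style_hint}"
    return prompt

print(prompt_from_path("custom_dataset/snowy_mountains/img1.jpg"))
print(prompt_from_path("custom_dataset/beaches/img7.jpg", style_hint="golden hour lighting"))
```

The optional style_hint is where a theme or style you want to emphasize can be added consistently across prompts.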

Integration into Real-World Scenarios

Once you have a well-trained and fine-tuned DALL·E model, the natural next step is to see it in action by integrating it into real-world applications.

    import random

    import openai

    def integrate_dalle(api_key, custom_dataset, user_prompt):
        """
        Integrate a fine-tuned DALL·E model into a real-world application.

        Parameters:
        - api_key (str): Your OpenAI API key.
        - custom_dataset (CustomDataset): Your fine-tuned DALL·E model and dataset.
        - user_prompt (str): The user's prompt for image generation.

        Returns:
        - generated_image_url (str): URL of the generated image.
        """
        openai.api_key = api_key

        # Choose a random image from the fine-tuned dataset
        image_path = random.choice(custom_dataset.img_paths)

        # Combine the user's prompt with the prompt derived from the selected image
        prompt = f"{user_prompt}. {custom_dataset.generate_prompt(image_path)}"

        # Generate image based on the combined prompt and fine-tuned model
        # (the model name is a placeholder carried over from the fine-tuning step)
        response = openai.Image.create(prompt=prompt, n=1, model="image-alpha-001-finetune")

        # Extract generated image URL
        generated_image_url = response['data'][0]['url']
        return generated_image_url

    # Example of integrating fine-tuned DALL·E into a real-world application
    api_key = "your_openai_api_key"
    dataset_path = "path/to/fine_tuned_dataset"
    fine_tuned_dataset = CustomDataset(root_dir=dataset_path, api_key=api_key)
    user_prompt = "A futuristic cityscape with flying cars"

    generated_image_url = integrate_dalle(api_key, fine_tuned_dataset, user_prompt)
    print("Generated Image URL:", generated_image_url)

The integrate_dalle function accepts the fine-tuned dataset (fine_tuned_dataset) and the user’s prompt. It randomly selects an image from the fine-tuned dataset, generates a prompt combining the user’s input and the selected image, and then uses the fine-tuned model to create a relevant image.
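In a real application the prompt comes from end users, so it is worth cleaning it up before sending it anywhere. The following sketch collapses stray whitespace, rejects empty input, and truncates overly long prompts. The normalize_prompt name and the max_chars default are assumptions; check the prompt length limit of the model you actually use.

```python
def normalize_prompt(user_prompt, max_chars=1000):
    """Collapse whitespace and enforce a maximum prompt length.

    max_chars is an assumed limit; check the documentation of the model you use.
    """
    cleaned = " ".join(user_prompt.split())
    if not cleaned:
        raise ValueError("Prompt must contain at least one visible character.")
    return cleaned[:max_chars]

print(normalize_prompt("  A futuristic   cityscape\nwith flying cars  "))
```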

Compatibility with PyTorch

A custom-trained DALL·E model saved as a PyTorch checkpoint can also be used directly within popular machine learning frameworks. Let’s consider PyTorch as an example.

    import random

    import torch
    from torchvision import transforms

    from custom_dataset import CustomDataset

    # Load your custom-trained DALL·E model
    dalle_model = torch.load('path/to/custom_dalle_model.pth')
    dalle_model.eval()  # switch to inference mode

    # Define input transformations compatible with DALL·E
    transform = transforms.Compose([
        transforms.Resize((256, 256)),
        transforms.ToTensor(),
    ])

    # Instantiate your custom dataset for inference
    custom_dataset = CustomDataset(root_dir='path/to/inference_dataset', api_key='your_openai_api_key', transform=transform)

    # Choose a random image from the inference dataset;
    # the dataset applies the transform, so this is already a tensor
    input_image = custom_dataset[random.randrange(len(custom_dataset))]

    # Add a batch dimension to the input for DALL·E
    input_tensor = input_image.unsqueeze(0)

    # Generate output using your custom-trained DALL·E model
    with torch.no_grad():
        output_image = dalle_model(input_tensor)

    # Display or save the generated output as needed
    # (e.g., using torchvision.utils.save_image or matplotlib for display)

Incorporate the saved DALL·E model into your PyTorch environment by following a straightforward model loading procedure. Once loaded, transform input images using PyTorch-compatible methods to prepare them for the inference process.
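To see what the input transformation does to the data, the sketch below mimics the effect of ToTensor on a tiny image in plain Python: pixel values in the range 0 to 255 are scaled to floats between 0 and 1, and the layout changes from height x width x channels to channels x height x width. The to_chw_float helper is our own stand-in so that the example runs without PyTorch installed.

```python
def to_chw_float(image_hwc):
    """Convert an H x W x C nested list of 0-255 ints to C x H x W floats in [0, 1]."""
    height = len(image_hwc)
    width = len(image_hwc[0])
    channels = len(image_hwc[0][0])
    return [
        [[image_hwc[y][x][c] / 255.0 for x in range(width)] for y in range(height)]
        for c in range(channels)
    ]

# A 2 x 2 RGB image: red, green / blue, white.
image = [
    [[255, 0, 0], [0, 255, 0]],
    [[0, 0, 255], [255, 255, 255]],
]
tensor = to_chw_float(image)
batch = [tensor]  # counterpart of unsqueeze(0): add a leading batch dimension
print(len(batch), len(tensor), len(tensor[0]), len(tensor[0][0]))
```

The printed shape (batch, channels, height, width) is the layout that PyTorch image models conventionally expect; with the 256 x 256 resize used above, the real input would have the shape 1 x 3 x 256 x 256.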

In your PyTorch workflow, deploy the model for inference, generating output images with precision and ease. This streamlined integration ensures a smooth and efficient utilization of DALL·E within your PyTorch-based projects.

PyTorch provides a dynamic computational graph, making it easy to define and modify neural network architectures on the fly. This flexibility is crucial for working with complex models like DALL·E, where experimentation and adaptation are common.

Diagrammatic Representation: DALL·E Model in PyTorch Project

           Load DALL·E Model
                   |
                   v
    +-----------------------------+
    | Model Loaded                |
    +-----------------------------+
                   |
                   v
     Define Input Transformations
                   |
                   v
    +-----------------------------+
    | Transform Defined           |
    +-----------------------------+
                   |
                   v
      Instantiate Custom Dataset
                   |
                   v
    +-----------------------------+
    | Dataset Created             |
    +-----------------------------+
                   |
                   v
          Choose Random Image
                   |
                   v
    +-----------------------------+
    | Image Chosen                |
    +-----------------------------+
                   |
                   v
         Transform Input Image
                   |
                   v
    +-----------------------------+
    | Image Transformed           |
    +-----------------------------+
                   |
                   v
    Generate Output Using DALL·E Model
                   |
                   v
    +-----------------------------+
    | Output Generated            |
    +-----------------------------+
                   |
                   v
          Display/Save Output
                   |
                   v
    +-----------------------------+
    | Output Displayed/Saved      |
    +-----------------------------+
                   |
                   v
    +-----------------------------+
    | End                         |
    +-----------------------------+

Conclusion

This guide provides a practical approach to training DALL·E on custom datasets. From dataset preparation and model training to image generation, we have walked through the key steps with code examples. By continuing to explore DALL·E’s capabilities, we can unlock more of the potential of AI-driven creativity and reshape the world of visual content for the better.
